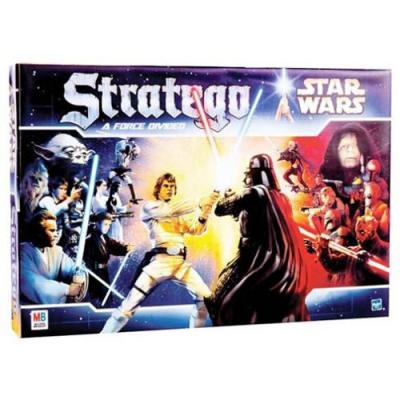

Stratego is a strategy board game for two players on a board of 10×10 squares, and millions of copies of this classic have been sold worldwide. Stratego is all about tactics, strategy, and cold, hard bluff: a combination of chess and poker. Its attraction lies mainly in the bluffing element, and it poses two distinct challenges: it requires long-term strategic thinking (like chess), and it requires players to deal with incomplete information (like poker).

Each player controls 40 pieces representing individual officer and soldier ranks in an army. The objective of the game is to find and capture the opponent's Flag, or to capture so many enemy pieces that the opponent cannot make any further moves. The goal is sometimes stated as capturing the opponent's Flag or all of their pieces, but according to the official rules of the International Stratego Federation, it is impossible to capture all 40 of your opponent's pieces. Section 12, "The end of the match", reads:

> 12.1 A game ends when:
>
> - one of the flags is captured;
> - at least one of the players has no movable piece anymore.

A movable piece is a piece that still has at least one legal move. The game therefore stops before the last pieces can be taken: capturing the Flag ends it immediately, and so does leaving the opponent with only immovable pieces.

Master of the Flag is a free online strategy game similar to the board game Stratego.

In another hidden-information board game, each player has 4 good ghosts and 4 evil ghosts, placed at the back of a 6×6 board; as in Stratego, only their owner can see which ghosts are good and which are evil. Each turn, a player moves one of their ghosts one square vertically or horizontally, and moving onto an opponent's ghost kills it. The object of the game is to get rid of all your own evil ghosts (by letting your opponent capture them), kill all of your opponent's good ghosts, or move one of your good ghosts off the board from one of your opponent's corner squares.

The board game cyvasse has been described as loosely a cross between chess, Stratego, and Blitzkrieg, and Tyrion uses it to play a meta-game with his opponents.

Games have become a popular proving ground for AI techniques, particularly in the field of reinforcement learning (RL).

…be able to learn the classic Atari game Montezuma's Revenge (based on just visual inputs and standard controls) and…

Introduced in 2017, Policy Space Response Oracles (PSRO) is a multi-agent RL method for finding approximate Nash equilibria (NE) that has achieved state-of-the-art performance in large imperfect-information two-player zero-sum games such as StarCraft and Stratego. Exploitability measures how much best-responding opponents could gain against a strategy profile; in a two-player zero-sum game it is zero exactly at a Nash equilibrium. Although PSRO will, in theory, eventually converge, in large games it is often terminated early, and because its exploitability can rise from one iteration to the next, the final exploitability can even be higher than it was at the beginning.

To address this issue, a research team from the University of California, Irvine and DeepMind has proposed Anytime Optimal PSRO (AO-PSRO), a new PSRO variant for two-player zero-sum games built on a tabular algorithm that is guaranteed to converge to a Nash equilibrium without increasing exploitability from one iteration to the next.

The team first introduces Anytime Optimal DO (AODO), a tabular no-regret double-oracle algorithm guaranteed to converge to a Nash equilibrium (that is, to settle on an optimal game-strategy solution). Each iteration adds best-response pure strategies to the restricted game, and because the original game has a finite number of pure strategies, AODO terminates with a meta-distribution that is a Nash equilibrium of the original game.

The team summarizes their work's main contributions as:

1. We present a tabular no-regret double oracle algorithm, Anytime Optimal DO (AODO), that updates a no-regret algorithm against a new best response every iteration in the inner loop. Unlike traditional double oracle methods, AODO is guaranteed not to increase exploitability from one iteration to the next.
2. We present a method for finding the minimum-exploitability meta-distribution in AODO via a no-regret procedure against best responses, called RM-BR DO.
3. We present a reinforcement learning version of AODO, Anytime Optimal PSRO (AO-PSRO), that outperforms PSRO on certain games and, unlike PSRO, tends not to increase exploitability.
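To make the double-oracle idea more concrete, here is a minimal pure-Python sketch on a small two-player zero-sum matrix game (rock-paper-scissors is our illustrative choice, not one of the paper's benchmarks). Under our reading of RM-BR, each player's meta-distribution over its restricted population is computed by regret matching against full-game best responses, which approximates the least-exploitable distribution that population allows; full-game best responses are then added to the populations. The function names (`regret_matching_vs_br`, `anytime_do_sketch`, `near_best`), the near-tie tolerance `tol`, and the iteration counts are hypothetical choices for this sketch, not the authors' code.

```python
"""Sketch: double oracle whose meta-distributions come from regret matching
against best responses. Hypothetical names and parameters; not the authors' code."""


def regret_matching_vs_br(payoffs, iters):
    """Regret matching over a restricted population vs. a best-responding opponent.

    payoffs[k][j] is the learner's payoff when it plays its k-th restricted
    strategy and the opponent plays full-game pure strategy j. Each round the
    opponent best-responds (minimizes the learner's expected payoff) to the
    learner's current strategy. Returns the learner's average strategy.
    """
    n, m = len(payoffs), len(payoffs[0])
    regrets = [0.0] * n
    strategy_sum = [0.0] * n
    for _ in range(iters):
        positive = [max(r, 0.0) for r in regrets]
        total = sum(positive)
        x = [p / total for p in positive] if total > 0 else [1.0 / n] * n
        values = [sum(x[k] * payoffs[k][j] for k in range(n)) for j in range(m)]
        j = min(range(m), key=values.__getitem__)  # opponent's pure best response
        for k in range(n):
            regrets[k] += payoffs[k][j] - values[j]
            strategy_sum[k] += x[k]
    total = sum(strategy_sum)
    return [s / total for s in strategy_sum]


def exploitability(A, x, y):
    """Sum of both players' best-response gains in zero-sum game A (row maximizes)."""
    row_best = max(sum(a * q for a, q in zip(row, y)) for row in A)
    col_best = min(sum(x[i] * A[i][j] for i in range(len(A))) for j in range(len(A[0])))
    return row_best - col_best


def near_best(values, tol, maximize):
    """Indices whose value is within tol of the best (max or min) value."""
    best = max(values) if maximize else min(values)
    return [k for k, v in enumerate(values) if abs(v - best) <= tol]


def anytime_do_sketch(A, inner_iters=20000, tol=0.1, max_outer=50):
    n_rows, n_cols = len(A), len(A[0])
    rows, cols = [0], [0]  # each population starts with one pure strategy
    history = []
    for _ in range(max_outer):
        # Row learner: payoff A[r][j] against every full-game column j.
        xr = regret_matching_vs_br([list(A[r]) for r in rows], inner_iters)
        # Column learner: payoff -A[i][c] against every full-game row i.
        yc = regret_matching_vs_br([[-A[i][c] for i in range(n_rows)] for c in cols], inner_iters)
        # Embed the restricted meta-distributions into full-game mixed strategies.
        x = [0.0] * n_rows
        for r, p in zip(rows, xr):
            x[r] = p
        y = [0.0] * n_cols
        for c, q in zip(cols, yc):
            y[c] = q
        history.append(exploitability(A, x, y))
        # Full-game (near-)best responses to the opponent's current meta-distribution.
        row_vals = [sum(a * q for a, q in zip(row, y)) for row in A]
        col_vals = [sum(x[i] * A[i][j] for i in range(n_rows)) for j in range(n_cols)]
        new_rows = [i for i in near_best(row_vals, tol, True) if i not in rows]
        new_cols = [j for j in near_best(col_vals, tol, False) if j not in cols]
        if not new_rows and not new_cols:
            break  # no new strategies: stop expanding the populations
        rows += new_rows
        cols += new_cols
    return x, y, history


if __name__ == "__main__":
    rps = [[0, -1, 1], [1, 0, -1], [-1, 1, 0]]  # rock-paper-scissors, row payoffs
    x, y, history = anytime_do_sketch(rps)
    print("exploitability per iteration:", [round(h, 3) for h in history])
    print("row strategy:", [round(p, 3) for p in x])
```

The near-tie tolerance is a pragmatic choice for this sketch: regret matching only approximates the least-exploitable distribution, so exact ties between best responses (which rock-paper-scissors produces) would otherwise be broken arbitrarily and could stall the populations early. Adding a few extra near-best strategies is harmless for a double oracle, since populations only grow and the game is finite. On rock-paper-scissors, the exploitability history starts at 2.0 and shrinks toward 0 as the populations fill out.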
